Big Tech leaks your chats to the government and advertisers

Your chats with LLMs have no legal protection. Every single one of you is exposed to these fundamental risks:| Problem | Description | Example |

|---|---|---|

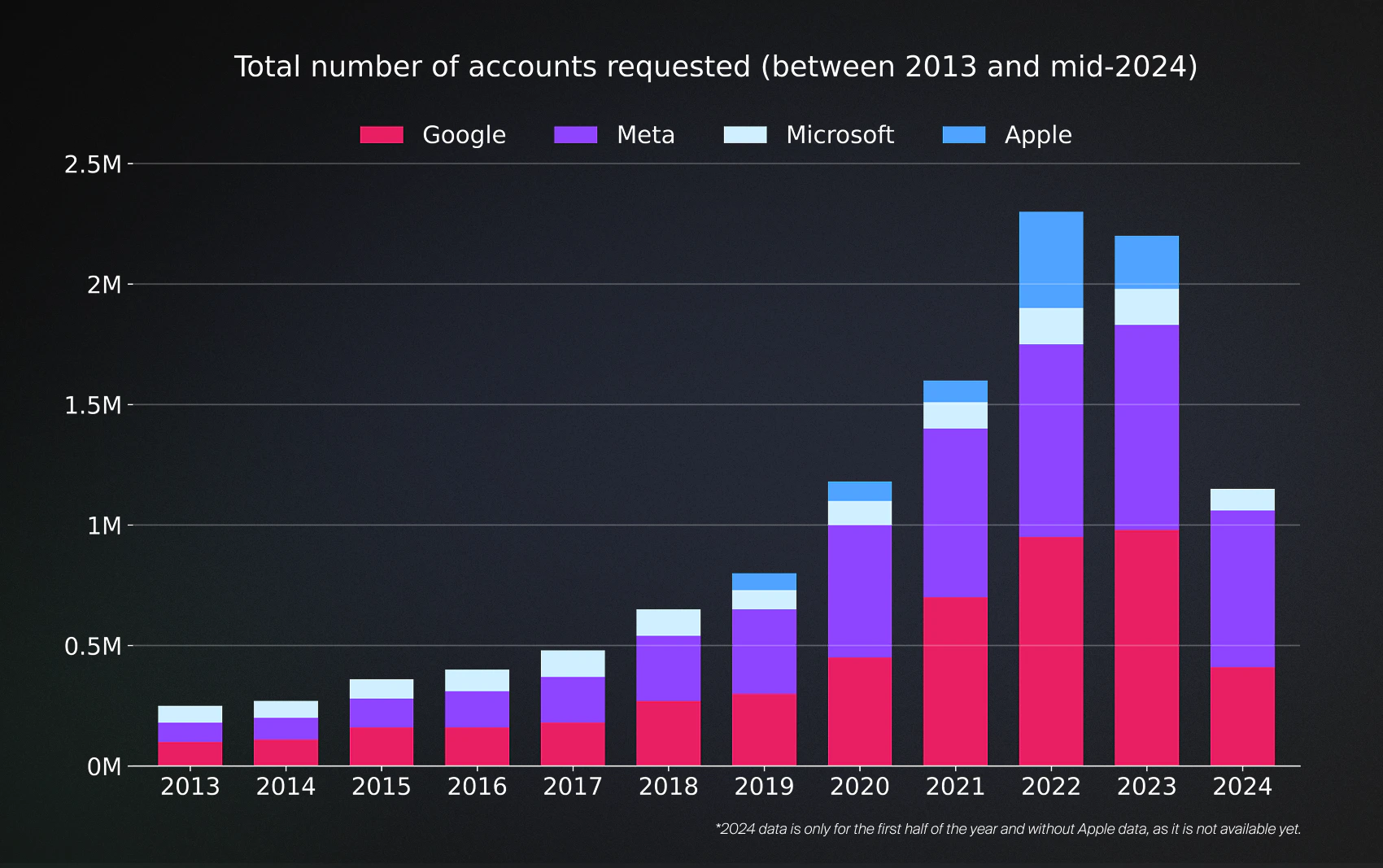

| Government surveillance | In the last 10 years, Meta, Microsoft and Google alone complied with nearly 9M government queries. 35% of those were coming from the US agencies. | You messed up with your tax report. Now IRS is auditing you. One request is all it takes to get your chatGPT logs. Most of those reading it would have info on tokens they bought and even questions on avoiding taxes. That would be enough to put your away for 3-5 years. |

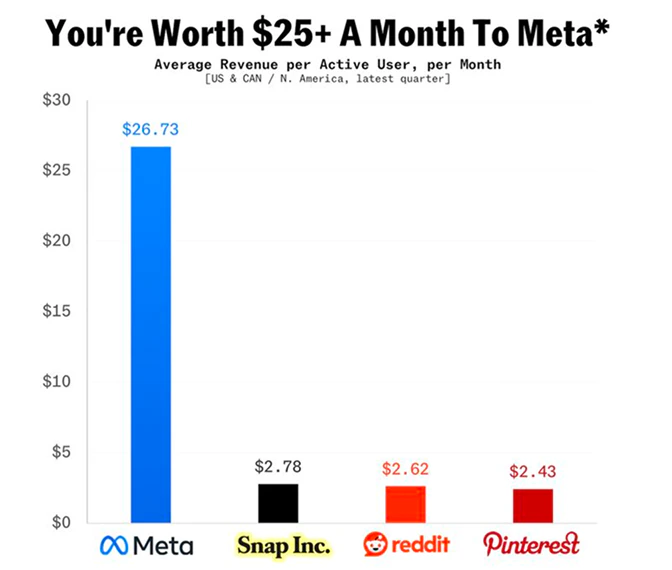

| Model training | Meta is already using your chat data to target their ads. xAI is planning to do the same. OpenAI plans the same. | You asked chatGPT to plan a trip to the countryside with your family. LLM recognizes emotional importance of the trip for you and increases the price of tickets by 20%. It knows you can afford it and it knows you’ll pay. |

| No control | There is no privacy guarantees when the provider owns the runtime, the memory and the payment rails. | Cloud providers can quantize the model, distill it, hot-swap to a cheaper model, drop its IQ, make it more manipulative, run experiments on your data, throttle output or raise prices or even turn off your API access completely. |

Government requests for user data

Government requests for user data

Meta makes $25/m from monetizing your attention

Meta makes $25/m from monetizing your attention

Listen to Sam Altman himself talk about it